About

edu plus now

edu plus now is an educational initiative of the Vishwakarma Group, popularly known as VIT Pune. It offers online and offline courses in Artificial Intelligence, Machine Learning, and Data Science (PG in AI-ML & Data Science), along with Six Sigma courses, all created by industry experts to help learners achieve their career potential. edu plus now provides flexible learning that fits a learner’s schedule. We believe in preparing students for success in a changing world through blended (online and offline) courses that pave the way for a successful career. Dedicated mentorship from industry experts, real-time projects, and case studies give learners the push they need to accelerate their careers.

By

Numbers

14+

Countries we covered in just

3 years

30+

Courses

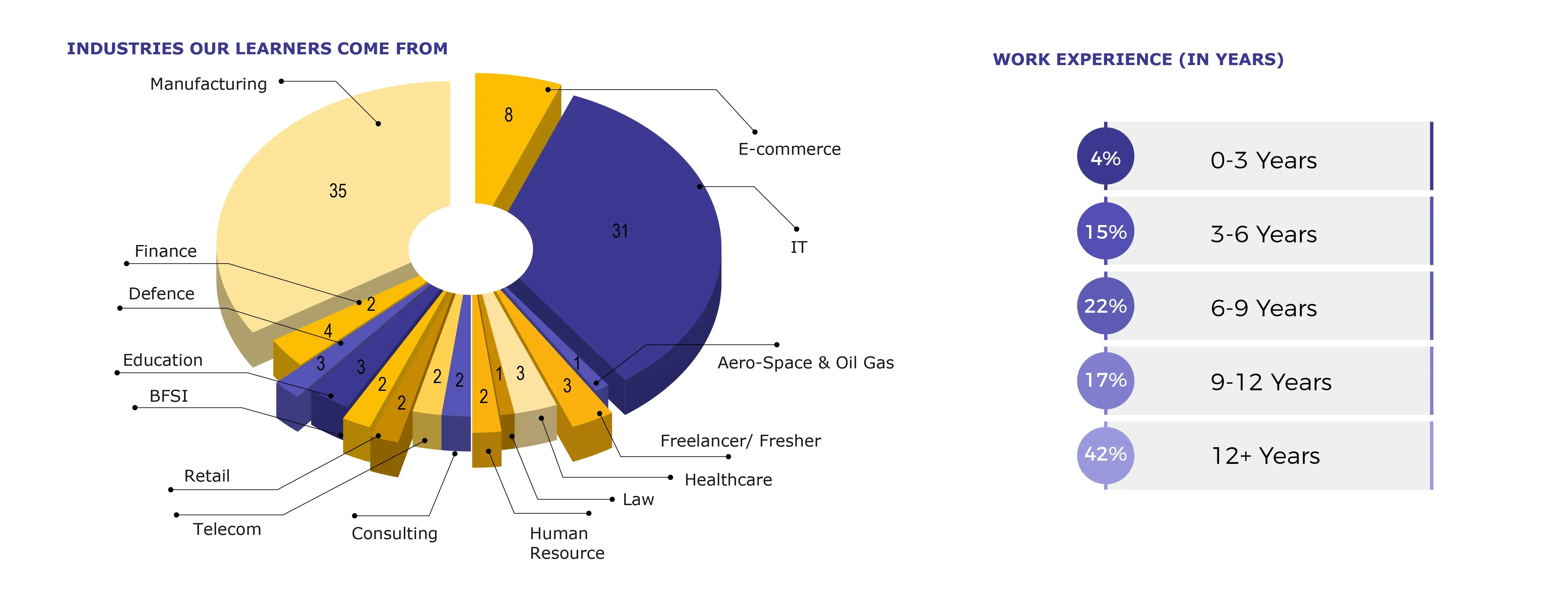

80%

Of our learners are having 12+ years of working experience

Explore Our Courses

Duration: 4 Months

Duration: 4 Months

Duration: 3 Months

Duration: 7 Months

Why edu plus now

Advanced study plans

Learn complex technical skills with videos, quizzes and assignments to develop your career and build towards a degree.

Focus on target

Choose from the best online courses in Pune: courses that are informative, support your long-term career goals, and help close the industry skill gap.

Knowledge Institute

Take advantage of a complete built-in programming environment and get hands-on experience solving real-world problems.

Thought Leadership

edu plus now helps you make the next move and upgrade your career. Its industry-relevant curriculum deepens your knowledge and prepares you to lead in your field.

Industry-Ready Courses

Learn industry-relevant skills that’ll make your resume stand out and ensure you’re ready to tackle the job market.

Flexible Learning

Access online learning resources anywhere, anytime to gain valuable skills and transform your life in meaningful ways.

Qualified Instructors

Connect with experts and qualified instructors from reputed universities to stay on top of the ever-evolving future of work.

Our Advisory Members

Mr. Yogesh Kulkarni

Mr. Shrikant Marathe

Mr. Hemant Joshi

Our Alumni Work At

Course was designed & deployed as required & most suitable to participants. Interactive session in which ISI faculty explained DFSS & LSS has cleared some of my myths about methodology of project...

Mr. Ch Naga Chaitanya

12+ yrs of Experience, Sr. Manager Business process excellence at Ingram micro

MBB course contents are well designed by ISI-Eduplusnow which is practically applicable to all industry domains either manufacturing or service, exceeding the expectations of today's need of the...

Mr. Arpit Pandey

13+ years of Experience, Senior manager, Business Excellence at HSIL, AGI Glaspac

Highly recommended course, world class faculty and really well organized. I look forward to enrolling in diploma courses on Data sciences when that happens. Rath sir is simply amazing, Sachin sir has...

Mr. Sudhir Pandey

15+ years Experience, Process Consultant at Wells Fargo

I would give 5 star rating based on the MBB course. The way course is covered by Prof Subrata Rath, explained with examples and in his very unique style without presentation which makes learning very...

Mr. Harpreet Singh

12+ years of Experience, Senior Manager - Service Improvement at Xerox Technology Services India

I am extremely glad that I did my MBB program from prestigious Indian Statistical Institute, Pune Chapter. Thank you Professor Rath Sir for the wonderful learning experience. You have been a great...

Mr. Kannan SP

11+ years of Experience, Deputy Manager - Continuous Improvement at Allianz

One of the best institute and faculty for practical approach of six sigma...

Mr. Surya Praksh Tiwari

18+ years of Experience, OPEX Lead at Teva API India Pvt Ltd

I am extremely happy and glad to be part of ISI's Six Sigma Master Black Belt program. It has given me immense pleasure in learning about practical applicability of Six Sigma Methodologies in various...

Mr. Viraj Chachad

18+ years of Experience, Sr. Manager (P&R Process Improvement) at Reliance Jio

The 12 days MBB training was awesome and ISI Faculty kept us engaged throughout the training with his amazing style of teaching. DFSS, Data Analytics and Python basics were the highlights for me in...

Mr. Deepak Kumar

BI Lead Working at Tata Consultancy Services Ltd. Melbourne Australia

The training had a special importance for me as it was quite a bit of time that I have not upgraded myself in my field of interest. As far as the course content and delivery is concerned it was...

Mr. Hemant Shinde

AVP - Global Process Advisory, Credit Suisse

The course was planned, designed & delivered in very organized manner by ISI Faculty. ISI Faculty explained all the concepts in very detailed manner without missing on any point. He display supreme...

Mr. Suyog Chadrakant Dharmadhikari

Training & Quality Manager, Working at Tata Communications Ltd

ISI & Edu Plus has made the MBB course more effective, lively and more on personal attention. Thankful to ISI Faculty, our Six Sigma Guru. All the best !!! I have seen better coordination and...

Mr. Atul Waghmare

16+yrs Experience, Working at Infosys

Statistics is a universally applicable subject and being an avid researcher and practitioner of Indian classical dance, I wanted to learn the nuances and what better than learning from institution...

Dr. Vasanth Kiran

Renowned Classical Dancer

The course seems to be well-curated as a primer for someone who wants to get started with six sigma for quality. The faculty are experts in the subject matter and are quite engaging. The course...

Sriram Ravichandran

Got a good basic idea about Six Sigma, it was a very nice experience to interact with such technically strong persons and we are so fortunate to have excellent faculties from...

RAJESH S RAMASWAMY

QA/QC ENGINEER, BRAHMOS AEROSPACE

Happy with the training provided by both the professor. Thank you ...

Pradeepkumar Ghosh

Project management, IT operations and IT vendor management & procurement

Training helped me a lot to build my basic understanding for Six sigma. It also provided me with a roadmap to start & implement the...

Naman Agarwal

Quality Assurance-NPD Engineer

In simple words it is superb and good and informative session.. Likes a lot and good opportunity for...

AMIT KUMAR

CONTINUOUS IMPROVEMENT - MANAGER

The course was great ! Thanks ISI and edu plus now...

Tarun Oberoi

Business Excellence Manager

Rath sir is always great in transferring knowledge. I have also attended his BB program earlier. A big thank you to Eduplus now to give us a big opportunity to...

Anirban Gupta

CI India Head

I am really thankful to Respected Rath Sir for his extraordinary knowledge. The way he taught us is...

UTTAM KUMAR

MANAGER - COMPONENTS SOLUTION BUSINESS

Excellent Combo of Sigma/Analytics, Industry Insights and Soft...

Pradeep Sharma

Manufacturing Quality Engineer

Excellent 12 days session! Good virtual platform to learn Six Sigma...

Vishal More

17+ years of experience, Operations Director

Practical Examples helped to understand the concepts in a very effective way. Six Sigma is a dense jungle but teaching methods and course material made it much easier for us to cross it...

Vinita Dave

8.5 years of experience, Manager, Lean Implementation Champion

Overall the session was wonderful interactive session with all the instructors. Especially S Rath sir, enjoyed learning with...

Anil Tupdar

15+ years of experience, Global Service Delivery Manager R2R

ISI Faculties are really excellent and amazing. Was excellent in showing with...

RAJADURAI ARUNASALAM

14+ years of experience, Associate Director

Subratha Rath is a powerhouse of Six Sigma and it was a pleasure and honor to be his student. Wonderful experience. Very...

Jai Gulabani

15+ years of experience, Vice President at Innowise

Excellent training, gained lot of insights due to Rath sir. Would also like to thank edu plus team for providing videos on...

Rahul Surve

20+ years of experience, Quality Lead - Black Belt in Business Transformation at Capita

Rath Sir, Thank you so much for training. I really enjoyed it, and appreciated platform that Edu plus and Indian statistical institute has been created...its truly helpful for working professional...

Dinesh Patil

19 years of experience at Varroc Lighting System

Fantabulous Training modules for an Overview of DMAIC, DFSS, LSS, Data Analytics given by best ISI...

Aditya Lodha

13 years of experience in Operational Excellence at HIL Ltd

It was quite lot learning experience with very Good demonstrations by the...

Nagaraja Rao

Deputy General Manager -Quality. Head Quality Assurance at Mando Automotive India Limited

A great course with wonderful teachers who taught us with practical examples which helped for better...

Prathamesh Halagi

1st Development Engineer at Done Robotics Ab Oy Vaasa, West and Inner Finland, Finland

I did edu plus now’s Data science course certified by Indian Statistical Institute, which helped me to learn latest technologies right from databases and analytics, statistical concepts, machine...

Shreevidya Menon

Software Programmer Zensar Technologies, Pune

I have 20 years of experience working as director in MNC. Now, I have started my own company and have started taking projects in Data Science. Edu plus now’s course in Data science certified by...

Prasad Rajadnya

Entrepreneur, Ex- Director-Finastra, Former VP-D+H

Edu plus now bridges the gap through real-time online tuition and one-on-one interaction with the faculty, enabling instant doubt clearing and providing 100% engagement. Like many of my course-mates,...

Ipsita Mondal

Bachelor in Power Engineering from Jadavpur University

Having absolutely no coding background, I was a little hesitant to sign up for this PG Data Science program. But edu plus now provides the most comprehensive curriculum of all the available data...

Somesh Valse

Packaging Engineering Fresher

Many thanks to edu plus now team on serving us this course. When it comes to the DFSS program, we have very limited options not only in India but across the globe. Very few institutes offer quality...

Sanjay Shukla

Six Sigma Master Black Belt at Bunge

I was looking forward to learning the proactive approach of scenario creation/ re-engineering/ innovation methodologies like design for six sigma or design thinking. Finally I got my hands on the...

Kashyap Anil

Automobile Professional with hands on experience in operations, projects, plant, quality, new products, HR & customer care

Excellent learning experience and platform being created edu plus now, and a lifelong mentor Subrata Rath Sir. New professional relationships are being created with experienced colleagues. A humble...

Timothy Lawrence

Continuous Improvement Manager, General Mills

Excellent session. Good LMS to share materials and recorded sessions helps further. Excellent organization of training. Very prompt response from edu plus now team. Thanks for all your...

Vidya K

BPI Manager, RRD Global Outsourcing Solutions

Excellent Session. Ton of learning with an amazing way of delivery. The faculty is very much knowledgeable on the topic. The LMS was super easy and convenient to navigate and...

Satya Narayan Panda

Sr. Operation Management Staff- GE Power | Ex. L&T, Godrej & Boyce | Lean Six-Sigma Black Belt | Mid-Career Coach

Fantastic Learning session.. Very Good insights and thoughts were structurally carried out by the...

Deepak Chandrasekar

Senior Manager at Guardian Life

It was excellent and insightful training. Great opportunity to learn from subject matter experts like Mr. Rath. It was indeed an amazing experience of learning with fun. I would like to express my...

Paramasivam K

Sr. Associate Director - Supply Chain Operations (Beverage Manufacturing) at PepsiCo

Attending this training helped me in changing my view towards improvements or process changes. Initial idea of improvement was filled with bias and assumptions without backing data. After attending...

Mr. Amit Ranjan

15+ yrs experience, Sr. Manager at Atos Syntel

The course was great, the support and faculty were well experienced and they gave clear explanations for all...

Mr. Dattatraya Bhosale

TL at Amazon

The instructors were having thorough knowledge on the topic and it helped in understanding the concepts very...

Mr. Neo Joy

14+yrs of Experience, Program Manager at Majorel

This course taught me a lot about six sigma. All professors are truly masters of stats and quality. They all are Ph.D. holders and domain...

Mr. Sukhad Pethkar

6+yrs experience, Lead Associate-Quality at WNS

I have enjoyed this course a lot and it will be useful for me in my job and my future...

Mrs. Rajashri Shendge

8+ yrs of experience, Process Analyst at Randstad India Pvt ltd

It was an excellent experience to complete the Six Sigma Green Belt as there is a perfect combination of best course management from the edu plus now team and invincible knowledge from ISI members....

Mr. Shivaji Vithalrao Sanmukhrao

7+ years of Experience, Process Engineer at Metallguss Brinschwitz GmbH, Germany

It’s a well-designed course with great content, case studies and examples. ISI Faculties made it very engaging and easy to understand & implement. Ample examples help one connect with the topics and...

Mr. Himadri Chatterjee

22+ Years of Experience, Senior Manager at Abbott India Pvt Ltd

My sincere thanks to my guruji ISI faculty for conducting the MBB sessions online so seamlessly and for great learning. And special thanks to edu plus now for organizing the training online tool....

Mr. Bala Prasad Buddiga

20+ years of Experience, Senior Quality Assurance Specialist at Heston-KUFPEC, Kuwait

As an Industrial engineering professional, I found Knowledge sharing, practical case studies, and even study material provided during the SSGB course, very practical and industry-oriented with up to...

Mr. Ajinkya Kulkarni

Associate Consultant, UMAS India Pvt. Ltd.

Thank you for a great course. The great teaching style of all the faculties with very interactive sessions. The course content has everything I expected and more. More practical and less theoretical...

Mr. Amar Tukaram Patil

Junior manager at Syngene International Ltd. Bangalore

ISI Professor is an outstanding facilitator. Heartfelt thanks to the edu plus now team for organizing such learning programs and offering the courses. The sessions were truly enlightening and helped...

Mr. Ashvinder Tiko

Assistant Manager (HR), Springer Nature

ISI Professor is an outstanding facilitator. Heartfelt thanks to the edu plus now team for organizing such learning programs and offering the courses. The sessions were truly enlightening and helped...

Dr. Chitra Joshi

23+ yrs of experience Working as Education Management EPS United Arab Emirates

Fabulous and Memorable Experience! I liked the interactive and fun learning sessions given by ISI Faculty and the amazing opportunity by ISI and edu plus...

Harish Subramanian

B.Tech Production Engineering

All training sessions were interactive and fun learning with an enthusiastic tutor. Really it was a great experience with ISI and edu plus...

Ms. Harshada J

IMS and WCM Coordinator at Canpack India Pvt. Ltd.

It was a great learning experience, DMAIC topics were covered in such exceptional ways which were quite easy and relatable to our jobs with practical industry-level business cases. Special thanks to...

Mr. Mahesh Poojary

Quality Team Leader at Infosys, Pune

Training was interactive and easy to follow. Overall it was great...

Ms. Nikita Phutela

Business analyst at Credit Suisse

Training received from Faculty was invaluable. The way faculty has taught was so easy and understandable from the first word. Practical approach that faculty took was the thing I was looking for. I...

Mr. Rahul Jain

Engineer II (Project Manager) at Johnson Controls

The course was very well designed and it was delivered very well by ISI Faculty. The course covered each and everything from literature to practical experience. Live examples were taken up and those...

Mr. Rajiv Kumar Gupta

Operations Manager S&P Global Market Intelligence

Really good experience to learn from ISI faculties. I liked Concepts which have real time...

Shakir Basra L

Project Management at Emerson Automation Solutions

Fabulous and memorable experience. Great skill to...

Mr. Saravanan Thangavel

Production Executive, Biocon Limited, Bangalore

It is a very useful course for me to gear up my...

Mr. Shanmugam Bairan

Production Executive, Biocon Limited, Bangalore

It gives me immense pleasure to be a part of this workshop "Six Sigma Green Belt". I am completely overwhelmed the kind of learning and enriching experience I have got from this training. I must say...

Mr. Shubhankar Huddedar

Quality Lead at Infosys, Pune

Before joining the course I was unaware about the applicability of the DMAIC in the service industry or NGOs. I had concerns if the course would help me in upscaling my skill set. After interacting...

Dr. Uma Kulkarni

MBBS, DCH & PGDHHM 23+ yr experience Proprietor of Dhrti Consulting Services

Six Sigma Black Belt training by ISI Pune facilitated by Eduplusnow helped me grasp the concepts of Six Sigma, Lean Six Sigma and DFSS at a deeper level involving requisite statistical tools &...

Mr. Kartik Menon

Deputy Manager -Project Management Office, Atos Syntel Pvt. Ltd.

It was a very, very great learning experience. The way ISI Faculty coached really sparked me to do great things. Am sure that I became better version now. I am feeling proud to be connected with a real...

Mr. Naresh S

14 yrs of Experience

I have thoroughly enjoyed taking Six Sigma Black Belt Course from ISI-Pune and Eduplusnow. I got suggested for this course from one of my close friends. I was a bit hesitant as I live in the USA East...

Ms. Rupali P

Associate at Amazon, USA

It was an amazing experience to begin my journey with Indian Statistical Institute Pune Unit and Eduplusnow through the Six Sigma Black Belt course. It was a great opportunity to learn and understand...

Mr. Srijan Sil

Senior Credit Analyst, Sovereign Ratings and Country Risk Management Crisil Limited, UMAS India Pvt. Ltd.

A must course for all Data Analytics /Quality professionals/aspirants. Eduplusnow is a great platform for interaction between top faculties and students. ISI Faculty is just amazing his knowledge and...

Mr. Shubrajyoti Chakravarty

Business Operation, Cognizant

I have attended the SSBB course in August 2020. I enjoyed every class. There was various learning and unlearning in Six Sigma & statistics topics. The way ISI Faculty taught, it is easy to remember....

Mr. Sumon Chakroborty

Manufacturing Advisory, Hitachi Vantara

Amalgamation of different industry demanding professional courses at edu plus now is overwhelming. The management attitude, faculty & platform is a benchmark for the online study industries. Teaching...

Mr. Suraj Mani Chaurasiya

CoE Supply Chain Management Business Processes, Bayer Pharmaceuticals, Berlin

It was an amazing course. I am able to find the difference it made in my problem solving...

Mr. Indranil Chakraborty

11+ years of Experience, Consultant at Verizon Data Service India Pvt Limited

I was Ultra fortunate to get trained by ISI Faculty for the MBB course in Nov & Dec 2020. Once in a while, you meet someone who will be very special in life for multiple reasons because of who they...

Mr. Srinivas T V

18+ Years of experience, Principal Consultant (Expert)- Service Excellence. Drive High Impact Six Sigma at HP Inc

Course was designed & deployed as required & most suitable to participants. Interactive session in which ISI faculty explained DFSS & LSS has cleared some of my myths about methodology of project...

Read MoreMr. Ch Naga Chaitanya

12+ yrs of Experience, Sr. Manager Business process excellence at Ingram micro

MBB course contents are well designed by ISI-Eduplusnow which is practically applicable to all industry domains either manufacturing or service, exceeding the expectations of today's need of the...

Read MoreMr. Arpit Pandey

13+ years of Experience, Senior manager, Business Excellence at HSIL, AGI Glaspac

Highly recommended course, world class faculty and Really well organized, I look forward to enrolling in diploma courses on Data sciences when that happens. Rathsir is simply amazing, Sachin sir has...

Read MoreMr. Sudhir Pandey

15+ years Experience, Process Consultant at Wells Fargo

I would give 5 star rating based on the MBB course. The way course is covered by Prof Subrata Rath, explained with examples and in his very unique style without presentation which makes learning very...

Read MoreMr. Harpreet Singh

12+ years of Experience, Senior Manager - Service Improvement at Xerox Technology Services India

I am extremely glad that I did my MBB program from prestigious Indian Statistical Institute, Pune Chapter. Thank you Professor Rath Sir for the wonderful learning experience. You have been a great...

Read MoreMr. Kannan SP

11+ years of Experience, Deputy Manager - Continuous Improvement at Allianz

One of the best institute and faculty for practical approach of six sigma...

Read MoreMr. Surya Praksh Tiwari

18+ years of Experience, OPEX Lead at Teva API India Pvt Ltd

I am extremely happy and glad to be part of ISI's Six Sigma Master Black Belt program. It has given me immense pleasure in learning about practical applicability of Six Sigma Methodologies in various...

Read MoreMr. Viraj Chachad

18+ years of Experience, Sr. Manager (P&R Process Improvement) at Reliance Jio

The 12 days MBB training was awesome and ISI Faculty kept us engaged throughout the training with his amazing style of teaching. DFSS, Data Analytics and Python basics were the highlights for me in...

Read MoreMr. Deepak Kumar

BI Lead Working at Tata Consultancy Services Ltd. Melbourne Australia

The training had a special importance for me as it was quite a bit of time that I have not upgraded myself in my field of interest. As far as the course content and delivery is concerned it was...

Read MoreMr Hemant Shinde

AVP - Global Process Advisory, Credit Suisse

The course was planned, designed & delivered in very organized manner by ISI Faculty. ISI Faculty explained all the concepts in very detailed manner without missing on any point. He display supreme...

Read MoreMr. Suyog Chadrakant Dharmadhikari

Training & Quality Manager, Working at Tata Communications Ltd

ISI & Edu Plus has made the MBB course more effective, lively and more on personal attention. Thankful to ISI Faculty, our Six Sigma Guru. All the best !!! I have seen better coordination and...

Read MoreMr. Atul Waghmare

16+yrs Experience, Working at Infosys

Statistics is a universally applicable subject and being an avid researcher and practitioner of Indian classical dance, I wanted to learn the nuances and what better than learning from institution...

Read MoreDr Vasanth Kiran

Renowned Classical Dancer

The course seems to be well-curated as a primer for someone who wants to get started with six sigma for quality. The faculty are experts in the subject matter and are quite engaging. The course...

Read MoreSriram Ravichandran

Got a good basic idea about Six Sigma, it was a very nice experience to interact with such technically strong persons and we are so fortunate to have excellent faculties from...

Read MoreRAJESH S RAMASWAMY

QA/QC ENGINEER, BRAHMOS AEROSPACE

Happy with the training provided by both the professor. Thank you ...

Read MorePradeepkumar Ghosh

Project management, IT operations and IT vendor management & procurement

Training helped me a lot to build my basic understanding for Six sigma. It also provided me with a roadmap to start & implement the...

Read MoreNaman Agarwal

Quality Assurance-NPD Engineer

In simple words it is superb and good and informative session.. Likes a lot and good opportunity for...

Read MoreAMIT KUMAR

CONTINUOUS IMPROVEMENT - MANAGER

The course was great ! Thanks ISI and edu plus now...

Read MoreTarun Oberoi

Business Excellence Manager

Rath sir is always great in transferring knowledge. I have also attended his BB program earlier. A big thank you to Eduplus now to give us a big opportunity to...

Read MoreAnirban Gupta

CI India Head

I am really thankful to Respected Rath Sir for his extraordinary knowledge. The way he taught us is...

Read MoreUTTAM KUMAR

MANAGER - COMPONENTS SOLUTION BUSINESS

Excellent Combo of Sigma/Analytics, Industry Insights and Soft...

Read MorePradeep Sharma

Manufacturing Quality Engineer

Excellent 12 days session! Good virtual platform to learn Six Sigma...

Read MoreVishal More

Exp - 17+ experience Yrs in operations Director

Practical Examples helped to understand the concepts in a very effective way. Six Sigma is a dense jungle but teaching methods and course material made it much easier for us to cross it...

Read MoreVinita Dave

8.5 yrs experience in Manager, Lean Implementation Champion

Overall the session was wonderful interactive session with all the instructors. Especially S Rath sir, enjoyed learning with...

Read MoreAnil Tupdar

15+yrs experience in Global Service Delivery Manager R2R

ISI Faculties are really excellent and amazing. Was excellent in showing with...

Read MoreRAJADURAI ARUNASALAM

14+yrs experience in Associate Director

Subratha Rath is a powerhouse of Six Sigma and it was a pleasure and honor to be his student. Wonderful experience. Very...

Read MoreJai Gulabani

15+ Years Exp In Innowise as Vice President

Excellent training, gained lot of insights due to Rath sir. Would also like to thank edu plus team for providing videos on...

Read MoreRahul Surve

20+ Years Of Exp. In Capita As Quality Lead-Black Belt in Business Transformation

Rath Sir, Thank you so much for training. I really enjoyed it, and appreciated platform that Edu plus and Indian statistical institute has been created...its truly helpful for working professional...

Read MoreDinesh Patil

19 Years Of Exp in Varroc Lighting System

Fantabulous Training modules for an Overview of DMAIC, DFSS, LSS, Data Analytics given by best ISI...

Read MoreAditya Lodha

13 Years Exp In HIL Ltd In Operational Excellence

It was quite lot learning experience with very Good demonstrations by the...

Read MoreNagaraja Rao

Deputy General Manager -Quality. Head Quality Assurance at Mando Automotive India Limited

A great course with wonderful teachers who taught us with practical examples which helped for better...

Read MorePrathamesh Halagi

1st Development Engineer at Done Robotics Ab Oy Vaasa, West and Inner Finland, Finland

I did edu plus now’s Data science course certified by Indian Statistical Institute, which helped me to learn latest technologies right from databases and analytics, statistical concepts, machine...

Read MoreShreevidya Menon

Software Programmer Zensar Technologies, Pune

I have 20 years of experience working as director in MNC. Now, I have started my own company and have started taking projects in Data Science. Edu plus now’s course in Data science certified by...

Read MorePrasad Rajadnya

Entrepreneur, Ex- Director-Finastra, Former VP-D+H

Edu plus now bridges the gap through real-time online tuition and one-on-one interaction with the faculty, enabling instant doubt clearing and providing 100% engagement. Like many of my course-mates,...

Read MoreIpsita Mondal

Bachelor in Power Engineering from Jadavpur University

Having absolutely no coding background, I was a little hesitant to sign up for this PG Data Science program. But edu plus now provides the most comprehensive curriculum of all the available data...

Read MoreSomesh Valse

Packaging Engineering Fresher

Many thanks to edu plus now team on serving us this course. When it comes to the DFSS program, we have very limited options not only in India but across the globe. Very few institutes offer quality...

Read MoreSanjay Shukla

Six Sigma Master Black Belt at Bunge

I was looking forward to learning the proactive approach of scenario creation/ re-engineering/ innovation methodologies like design for six sigma or design thinking. Finally I got my hands on the...

Read MoreKashyap Anil

Automobile Professional with hands on experience in operations, projects, plant, quality, new products, HR & customer care

Excellent learning experience and platform being created by edu plus now, and a lifelong mentor Subrata Rath Sir. New professional relationships are being created with experienced colleagues. A humble...

Timothy Lawrence

Continuous Improvement Manager, General Mills

Excellent session. Good LMS to share materials and recorded sessions helps further. Excellent organization of training. Very prompt response from edu plus now team. Thanks for all your...

Vidya K

BPI Manager, RRD Global Outsourcing Solutions

Excellent Session. Ton of learning with an amazing way of delivery. The faculty is very much knowledgeable on the topic. The LMS was super easy and convenient to navigate and...

Satya Narayan Panda

Sr. Operation Management Staff- GE Power | Ex. L&T, Godrej & Boyce | Lean Six-Sigma Black Belt | Mid-Career Coach

Fantastic learning session. Very good insights and thoughts were structurally carried out by the...

Deepak Chandrasekar

Senior Manager at Guardian Life

It was excellent and insightful training. Great opportunity to learn from subject matter experts like Mr. Rath. It was indeed an amazing experience of learning with fun. I would like to express my...

Paramasivam K

Sr. Associate Director - Supply Chain Operations (Beverage Manufacturing) at PepsiCo

Attending this training helped me in changing my view towards improvements or process changes. Initial idea of improvement was filled with bias and assumptions without backing data. After attending...

Mr. Amit Ranjan

15+ yrs experience, Sr. Manager at Atos Syntel

The course was great, the support and faculty were well experienced and they gave clear explanations for all...

Mr. Dattatraya Bhosale

TL at Amazon

The instructors had thorough knowledge of the topic and it helped in understanding the concepts very...

Mr. Neo Joy

14+ yrs of Experience, Program Manager at Majorel

This course taught me a lot about six sigma. All professors are truly masters of stats and quality. They all are Ph.D. holders and domain...

Mr. Sukhad Pethkar

6+ yrs experience, Lead Associate-Quality at WNS

I have enjoyed this course a lot and it will be useful for me in my job and my future...

Mrs. Rajashri Shendge

8+ yrs of experience, Process Analyst at Randstad India Pvt ltd

It was an excellent experience to complete the Six Sigma Green Belt as there is a perfect combination of best course management from the edu plus now team and invincible knowledge from ISI members....

Mr. Shivaji Vithalrao Sanmukhrao

7+ years of Experience, Process Engineer at Metallguss Brinschwitz GmbH, Germany

It’s a well-designed course with great content, case studies and examples. ISI Faculties made it very engaging and easy to understand & implement. Ample examples help one connect with the topics and...

Mr. Himadri Chatterjee

22+ Years of Experience, Senior Manager at Abbott India Pvt Ltd

My sincere thanks to my guruji ISI faculty for conducting the MBB sessions online so seamlessly and for great learning. And special thanks to edu plus now for organizing the training online tool....

Mr. Bala Prasad Buddiga

20+ years of Experience, Senior Quality Assurance Specialist at Heston-KUFPEC, Kuwait

As an Industrial engineering professional, I found Knowledge sharing, practical case studies, and even study material provided during the SSGB course, very practical and industry-oriented with up to...

Mr. Ajinkya Kulkarni

Associate Consultant, UMAS India Pvt. Ltd.

Thank you for a great course. The great teaching style of all the faculties with very interactive sessions. The course content has everything I expected and more. More practical and less theoretical...

Mr. Amar Tukaram Patil

Junior manager at Syngene International Ltd. Bangalore

ISI Professor is an outstanding facilitator. Heartfelt thanks to the edu plus now team for organizing such learning programs and offering the courses. The sessions were truly enlightening and helped...

Mr. Ashvinder Tiko

Assistant Manager (HR), Springer Nature

ISI Professor is an outstanding facilitator. Heartfelt thanks to the edu plus now team for organizing such learning programs and offering the courses. The sessions were truly enlightening and helped...

Dr. Chitra Joshi

23+ yrs of experience, working in Education Management, EPS, United Arab Emirates

Fabulous and Memorable Experience! I liked the interactive and fun learning sessions given by ISI Faculty and the amazing opportunity by ISI and edu plus...

Harish Subramanian

B.Tech Production Engineering

All training sessions were interactive and fun learning with an enthusiastic tutor. Really it was a great experience with ISI and edu plus...

Ms. Harshada J

IMS and WCM Coordinator at Canpack India Pvt. Ltd.

It was a great learning experience, DMAIC topics were covered in such exceptional ways which were quite easy and relatable to our jobs with practical industry-level business cases. Special thanks to...

Mr. Mahesh Poojary

Quality Team Leader at Infosys, Pune

Training was interactive and easy to follow. Overall it was great...

Ms. Nikita Phutela

Business analyst at Credit Suisse

Training received from Faculty was invaluable. The way the faculty taught was easy to understand from the first word. The practical approach that the faculty took was the thing I was looking for. I...

Mr. Rahul Jain

Engineer II (Project Manager) at Johnson Controls

The course was very well designed and it was delivered very well by ISI Faculty. The course covered each and everything from literature to practical experience. Live examples were taken up and those...

Mr. Rajiv Kumar Gupta

Operations Manager S&P Global Market Intelligence

Really good experience to learn from ISI faculties. I liked Concepts which have real time...

Shakir Basra L

Project Management at Emerson Automation Solutions

Fabulous and memorable experience. Great skill to...

Mr. Saravanan Thangavel

Production Executive, Biocon Limited, Bangalore

It is a very useful course for me to gear up my...

Mr. Shanmugam Bairan

Production Executive, Biocon Limited, Bangalore

It gives me immense pleasure to be a part of this workshop "Six Sigma Green Belt". I am completely overwhelmed by the kind of learning and enriching experience I have got from this training. I must say...

Mr. Shubhankar Huddedar

Quality Lead at Infosys, Pune

Before joining the course I was unaware of the applicability of DMAIC in the service industry or NGOs. I had concerns about whether the course would help me in upscaling my skill set. After interacting...

Dr. Uma Kulkarni

MBBS, DCH & PGDHHM 23+ yr experience Proprietor of Dhrti Consulting Services

Six Sigma Black Belt training by ISI Pune facilitated by Eduplusnow helped me grasp the concepts of Six Sigma, Lean Six Sigma and DFSS at a deeper level involving requisite statistical tools &...

Mr. Kartik Menon

Deputy Manager -Project Management Office, Atos Syntel Pvt. Ltd.

It was a truly great learning experience. The way ISI Faculty coached really sparked me to do great things. I am sure that I have become a better version of myself now. I am feeling proud to be connected with a real...

Mr. Naresh S

14 yrs of Experience

I have thoroughly enjoyed taking the Six Sigma Black Belt Course from ISI-Pune and Eduplusnow. One of my close friends suggested this course to me. I was a bit hesitant as I live in the USA East...

Ms. Rupali P

Associate at Amazon, USA

It was an amazing experience to begin my journey with Indian Statistical Institute Pune Unit and Eduplusnow through the Six Sigma Black Belt course. It was a great opportunity to learn and understand...

Mr. Srijan Sil

Senior Credit Analyst, Sovereign Ratings and Country Risk Management Crisil Limited, UMAS India Pvt. Ltd.

A must course for all Data Analytics /Quality professionals/aspirants. Eduplusnow is a great platform for interaction between top faculties and students. ISI Faculty is just amazing his knowledge and...

Mr. Shubrajyoti Chakravarty

Business Operation, Cognizant

I attended the SSBB course in August 2020. I enjoyed every class. There was a lot of learning and unlearning in Six Sigma & statistics topics. The way ISI Faculty taught, it is easy to remember....

Mr. Sumon Chakroborty

Manufacturing Advisory, Hitachi Vantara

Amalgamation of different industry demanding professional courses at edu plus now is overwhelming. The management attitude, faculty & platform is a benchmark for the online study industries. Teaching...

Mr. Suraj Mani Chaurasiya

CoE Supply Chain Management Business Processes, Bayer Pharmaceuticals, Berlin

It was an amazing course. I am able to find the difference it made in my problem solving...

Mr. Indranil Chakraborty

11+ years of Experience, Consultant at Verizon Data Service India Pvt Limited

I was Ultra fortunate to get trained by ISI Faculty for the MBB course in Nov & Dec 2020. Once in a while, you meet someone who will be very special in life for multiple reasons because of who they...

Mr. Srinivas T V

18+ Years of experience, Principal Consultant (Expert)- Service Excellence. Drive High Impact Six Sigma at HP Inc

Course was designed & deployed as required & most suitable to participants. Interactive session in which ISI faculty explained DFSS & LSS has cleared some of my myths about methodology of project...

Mr. Ch Naga Chaitanya

12+ yrs of Experience, Sr. Manager Business process excellence at Ingram micro

Our Learners